By Alberto Betella, co-founder of RSS.com

True story

In the nineties, when I was in high school in Italy, our teacher gave us homework assignments to write essays on certain topics. At the time, everything was handwritten: text, homework, exams. Not everyone had a computer yet, so it was customary for students to just write everything by hand.

It was 1995 and I owned a Commodore Amiga 600 (the best computer ever) with a word processor that had a spell checker. Because my handwriting, when I write fast, is almost unintelligible, and because I type faster than I write, I started delivering most of my homework typed on my computer.

After a few weeks, my teacher realized that some word processors included a spell checker. His class was all about language and grammar, so typos and grammatical errors in essays were part of the evaluation. This was a problem. Was homework written on a computer with an automatic spell checker still valid, or was it cheating?

He did something very clever: he decided to think aloud and discuss the topic with our class. We debated it. I don’t remember every nuance, but I remember the outcome. We ultimately agreed that a spell checker was not so different from a printed dictionary (which we were all allowed to use when writing our essays), just faster to look up. So as long as it was clear that a spell checker was available, the essay was valid. The tool had changed but the work was still ours.

That was thirty years ago. The same conversation is happening again today, just at a much bigger scale.

The spell checker got a lot more powerful

Artificial intelligence keeps spreading across all sorts of use cases, and conversations about its ethical use lag behind its adoption. The gap is clearly visible in the podcasting and media industry, where AI-generated content has become common enough that the industry now has a name, “AI slop”, for mass-produced content that exists not to inform or entertain, but to game monetization systems. These shows flood podcast directories, compete for visibility with human-made content, and often carry no indication that they are synthetic.

But just like my spell checker in 1995, the use of AI per se in producing media like a podcast episode is not just legitimate. It is genuinely exciting.

Voice cloning for multilingual content is perhaps the best example. A podcaster who creates brilliant content in Italian can now deliver the same episode in English, Spanish, French, or Mandarin, in their own voice. The original journalism is human. The translation leverages AI. Cultural diversity and accessibility made possible by technology.

Post-production tools like noise removal, filler-word elimination, audio leveling, and transcript generation are now standard practice. Nobody would argue that using a noise gate is “AI content”. These are conventional production techniques, just like the good old spell checker in our favorite writing app.

So we cannot put it all in the same bucket. The line needs to be drawn around the substance of the content. If a human conceived it, created it, and curated it, but AI helped produce or distribute it, that’s a tool being used well. If AI conceived it, generated it, and published it with minimal human involvement, and there is no disclosure, that’s where the concern lies. The problem is not AI. The problem is opacity.

Back in my classroom, the solution was simple: acknowledge the tool, then let the work speak for itself. The same principle applies here.

Platforms and regulators have already moved

Apple Podcasts requires creators to disclose AI use in audio and metadata when AI generates a material portion of the episode (Content Guidelines, Sections 1.11 and 1.12).

YouTube requires disclosure of realistic altered or synthetic content, but explicitly exempts scriptwriting, ideation, and audio cleanup.

Spotify classifies content in three tiers: human-created, AI-assisted, and fully AI-generated. They prohibit unconsented voice cloning.

The EU AI Act (Article 50, effective August 2026) requires that deepfakes and AI-generated text on matters of public interest be disclosed. Fines reach up to 15 million EUR or 3% of global turnover.

The FTC treats deceptive AI-generated endorsements and fake reviews as violations of Section 5 of the FTC Act.

The direction is consistent: transparency is becoming a requirement, not a suggestion. Podcasters who disclose now are future-proofing themselves.

The open ecosystem has been debating how

Podcasting 2.0 is a set of new features and standards that make podcasting better for everyone. For over a year, the community has been discussing ways to disclose AI in podcasts within the open ecosystem, yet none of the proposals have reached consensus.

Ideas have ranged from granular tags specifying exactly which AI tools were used, to percentage-based disclosure, to broader multi-purpose tags covering AI alongside sponsorships and compensation, to simple attributes on existing tags.

Each proposal has merit. But the debate keeps stalling around the same tensions: how detailed should disclosure be, should it use a new tag or extend an existing one, and who moves first when apps won’t implement what hosts don’t use and hosts won’t use what apps don’t show.

It is a bit like my classroom in 1995, except with more people in the room and no teacher to moderate the discussion.

Making it real

While the debate continued, some companies decided to ship.

Spreaker (owned by iHeartMedia) launched an AI disclosure checkbox in its dashboard at the end of 2025. The disclosure was visible on the podcast’s public page and appended to the episode description, but it was not written to the RSS feed.

In early March 2026, RSS.com shipped the same concept but took it further by writing the disclosure signal directly into the open RSS feed. A few days later, Spreaker followed, adopting the same tag and attribute.

That distinction matters. When disclosure lives only in a hosting dashboard, it mainly serves the hosting company’s own internal use. When it lives in the RSS feed, it travels with the podcast. Every app that reads the feed can see it. It is portable, verifiable, and platform-agnostic.

The open RSS feed is the backbone of podcasting. It is what makes podcasting decentralized and not controlled by any single platform. When AI disclosure data lives in the feed:

- It is portable: the disclosure travels with the podcast regardless of which app plays it

- It is verifiable: anyone can inspect the feed and see the signal

- It is platform-agnostic: it works across Apple Podcasts, Spotify, Pocket Casts, Overcast, and every other app that reads RSS

- It is persistent: it is part of the permanent record, not a UI element that can disappear

How it works under the hood

The AI disclosure in RSS feeds currently lives in the podcast:txt tag, a flexible container from the Podcasting 2.0 namespace that fits exactly this kind of use: metadata that does not yet have a dedicated tag.

The approach follows the same pattern as itunes:explicit, the tag Apple introduced in 2005 that has been working well for over twenty years. A simple question with a clear answer: yes or no. Podcasters self-assess, just like they self-assess whether their content is explicit. We rely on good faith, and good faith has worked for two decades.

There is one nuance worth spelling out. Because AI disclosure is new, silence has to be respected as silence. An episode published years ago was never asked about AI use. A podcaster who has not yet updated a feed has not been asked either. So RSS.com distinguishes between three states: yes, no, and not-yet-answered. The first two write the signal to the feed. The third writes nothing, because nothing has been declared. This keeps the data honest without retroactively labelling an entire back catalogue.
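The three states can be sketched in a few lines of Python using only the standard library. This is an illustration under stated assumptions, not RSS.com's actual implementation: the article does not spell out the attribute or its values, so the `purpose="ai-disclosure"` value, the `yes`/`no` text, and the placement inside each `<item>` are hypothetical placeholders. Only the `podcast:txt` tag, its `purpose` attribute, and the namespace URI come from the Podcasting 2.0 namespace.

```python
import xml.etree.ElementTree as ET
from typing import Optional

PODCAST_NS = "https://podcastindex.org/namespace/1.0"
ET.register_namespace("podcast", PODCAST_NS)

# Hypothetical purpose value, used here only for illustration.
AI_PURPOSE = "ai-disclosure"


def add_ai_disclosure(item: ET.Element, answer: Optional[bool]) -> None:
    """Write the signal for yes/no; write nothing when unanswered (None)."""
    if answer is None:
        return  # not-yet-answered: nothing was declared, so nothing is written
    txt = ET.SubElement(item, f"{{{PODCAST_NS}}}txt", {"purpose": AI_PURPOSE})
    txt.text = "yes" if answer else "no"  # hypothetical values


def render_item(title: str, answer: Optional[bool]) -> str:
    """Build a minimal <item> and serialize it to a string."""
    item = ET.Element("item")
    ET.SubElement(item, "title").text = title
    add_ai_disclosure(item, answer)
    return ET.tostring(item, encoding="unicode")


if __name__ == "__main__":
    for answer in (True, False, None):
        print(render_item("Episode 1", answer))
```

Modelling the answer as `Optional[bool]` rather than a plain boolean is what keeps an older, never-asked episode from being silently recorded as "no".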

Is it perfect? No. But it does not need to be perfect to be valuable.

By putting the question in front of every podcaster, we create something that did not exist before: a choice. Before the prompt, there was no disclosure because there was no mechanism. Now there is. And once the mechanism exists, the answer carries meaning: saying yes is a transparent declaration, saying no is an equally intentional one, and not answering at all is a deliberate silence. All three are signals. That distinction matters.

This is also important for hosting companies and platforms. Regulators increasingly expect platforms to foster transparency around AI-generated content. If a hosting company does not even offer a disclosure mechanism, it is hard to argue that it takes AI transparency seriously. The disclosure prompt is not only a feature for podcasters, but also compliance infrastructure for the platforms that serve them.

And it opens another door: once disclosure exists, platforms can decide what to do when AI is not voluntarily disclosed but probably should be. That alone is a powerful tool for content trust and brand safety.

So when should you disclose?

It is all about the substance. If AI is the performer, delivering the content your listeners came for, whether that is the voice, the music, or the synthesis of the episode: answer yes. If AI is a production tool, supporting your human performance with cleanup, effects, or behind-the-scenes work: answer no.

For example: a fully AI-narrated episode where no human voice is present? Answer yes. Using AI to remove background noise and generate your transcript? Answer no.
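Expressed as code, the rule above comes down to a single check on the performer side. The sketch below is only a rough illustration and is not The Substance Test itself: the `Episode` fields are hypothetical names chosen to mirror the examples in this section, and real cases will need more nuance than five booleans.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    # Hypothetical fields; AI as the performer
    ai_voice: bool = False        # AI delivers the voice listeners hear
    ai_music: bool = False        # AI-generated music is the content itself
    ai_synthesis: bool = False    # AI synthesized the episode's substance
    # Hypothetical fields; AI as a production tool
    ai_noise_removal: bool = False
    ai_transcript: bool = False


def should_disclose(ep: Episode) -> bool:
    """True when AI is the performer; production tools alone do not count."""
    return ep.ai_voice or ep.ai_music or ep.ai_synthesis


if __name__ == "__main__":
    print(should_disclose(Episode(ai_voice=True)))
    print(should_disclose(Episode(ai_noise_removal=True, ai_transcript=True)))
```

Note that the production-tool fields are deliberately ignored by `should_disclose`: they are recorded, but they never change the answer.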

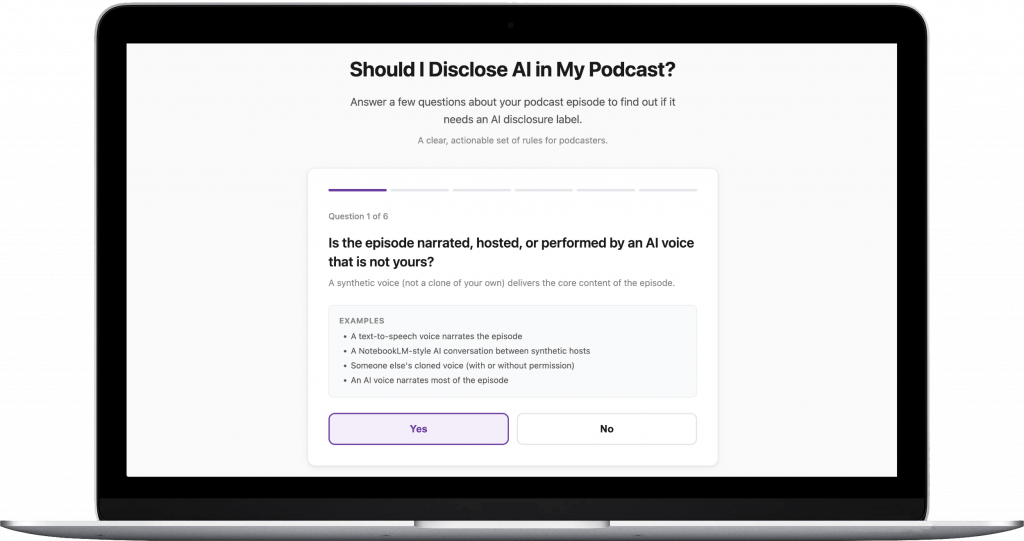

I wrote a decision framework and set of guidelines for AI Disclosure in Podcasting that covers most cases in detail. And to make it even more practical, I launched what I call The Substance Test: a quick web questionnaire that walks you through a few simple questions about your episode and tells you whether you should disclose or not. It takes less than a minute.

What comes next

This simple disclosure approach is a starting point. As the industry matures, more structured metadata may emerge: which aspects of production used AI, what tools were involved, whether the voice is cloned or fully synthetic. But starting with a simple, universally adoptable signal creates the foundation that richer metadata can build on.

App-side filtering. Once enough feeds carry the AI disclosure signal, podcast apps can offer listeners real choices: filter for human-only content, surface AI-enhanced shows, or simply display the label and let listeners decide.
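On the app side, reading the signal could look roughly like the sketch below, which parses a feed with Python's standard library and keeps three outcomes apart: declared yes, declared no, and not declared. The `purpose="ai-disclosure"` value, the `yes`/`no` text, the item-level placement, and the sample feed are all assumptions made for illustration, not a documented format.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional

PODCAST_NS = "https://podcastindex.org/namespace/1.0"
AI_PURPOSE = "ai-disclosure"  # hypothetical purpose value

# Hypothetical sample feed with one episode in each of the three states.
SAMPLE_FEED = """<rss xmlns:podcast="https://podcastindex.org/namespace/1.0">
<channel>
<item><title>A</title><podcast:txt purpose="ai-disclosure">no</podcast:txt></item>
<item><title>B</title><podcast:txt purpose="ai-disclosure">yes</podcast:txt></item>
<item><title>C</title></item>
</channel>
</rss>"""


def read_disclosure(item: ET.Element) -> Optional[bool]:
    """Return True/False for a declared answer, None when nothing is declared."""
    for txt in item.findall(f"{{{PODCAST_NS}}}txt"):
        if txt.get("purpose") == AI_PURPOSE:
            value = (txt.text or "").strip().lower()
            if value in ("yes", "no"):
                return value == "yes"
    return None


def titles_declared_human(feed_xml: str) -> List[str]:
    """Titles of episodes that explicitly declared 'no' (undeclared ones are skipped)."""
    root = ET.fromstring(feed_xml)
    return [
        item.findtext("title", default="")
        for item in root.iter("item")
        if read_disclosure(item) is False
    ]


if __name__ == "__main__":
    print(titles_declared_human(SAMPLE_FEED))
```

Comparing with `is False` rather than `not ...` matters here: an undeclared episode returns `None` and is left out of the "declared human" list without being treated as AI-generated.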

Industry-wide adoption. If more podcast hosting companies add AI disclosure to their RSS feeds, coverage expands significantly. This creates a positive feedback loop: more data in feeds gives apps reason to build filtering features, app features give listeners the ability to make informed choices, listener demand incentivizes more hosts to adopt disclosure, and advertisers gain the transparency they need to make informed decisions about where their budgets go.

AI disclosure belongs in the RSS feed because openness, portability, and decentralization are not just technical features of RSS; they are the values that podcasting was built on.

My Italian teacher got it right in 1995. He didn’t ban the spell checker, but he didn’t ignore it either. He brought it into the open, discussed it with the class, and let us agree on a rule. That is exactly what podcasting needs to do with AI: acknowledge the tool, agree on transparency, and let the work speak for itself.

Resources

- AI Disclosure in Podcasting: a clear, actionable set of guidelines for podcasters

- The Substance Test: a quick questionnaire to help you decide whether to disclose AI

- Example of an AI-generated episode on RSS.com with disclosure in the RSS feed

- podcast:txt tag documentation (Podcasting 2.0 namespace)

About the author

Alberto Betella is the co-founder of RSS.com and holds a PhD in Emotion AI. Prior to RSS.com, he served as Chief Technology Officer at Alpha, the European Moonshot Factory created by the telco giant Telefonica. Back in 2006, he developed Podcast Generator, one of the very first open-source web apps for podcast self-hosting, empowering a wide community of podcasters for over a decade.